
Ask AI about a book that doesn't exist, and it will produce a detailed review complete with author, publisher, chapters, and key arguments. A 2023 Nature study confirmed that AI fabricates academic references that look perfectly formatted but don't exist. The implications are serious: AI-generated fake papers are already appearing in academic ecosystems, with fabricated citations potentially becoming accepted as real sources.

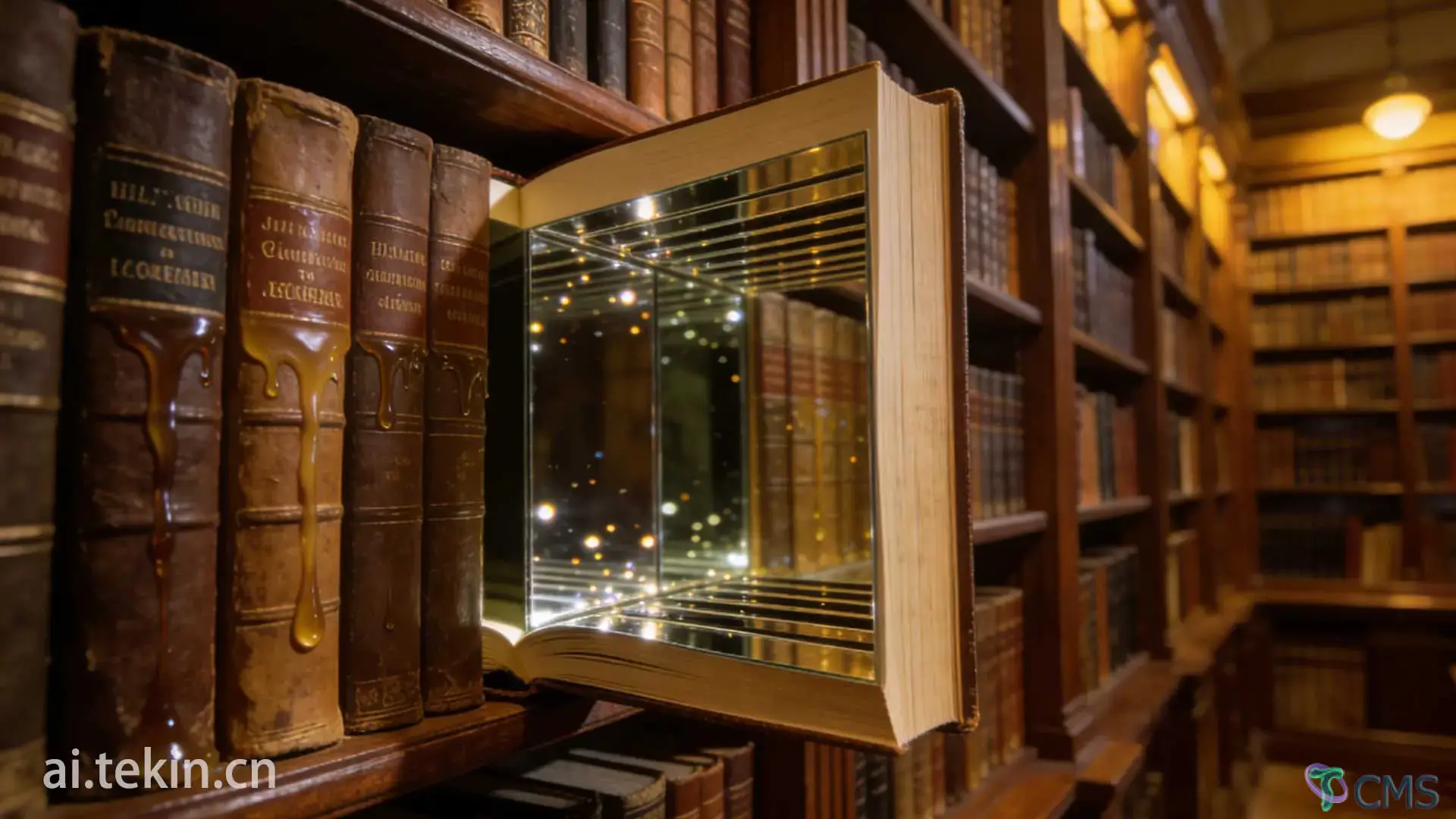

Opening Insight

AI doesn't know whether this book exists, but it knows "what a book should look like."

So you give it a title, and it can fabricate an entire "book" for you.

AI's "book-making ability" isn't a coincidence; it's an underlying mechanism. It's simply applying templates.

You may have tried this yourself: ask AI to introduce a book you casually made up, and it will immediately give you the author, publisher, publication year, summary, table of contents, core arguments for each chapter, even "reader reviews" and "academic impact."

You'll think it "actually read this book."

But the truth is:

It's not citing a book—it's generating a book.

This isn't an isolated case; it's a common characteristic of AI. In 2023, Nature published a study specifically investigating AI "hallucination," in which researchers found that AI fabricates references and book citations that don't exist anywhere in the real world. These fabricated citations are perfectly formatted and detailed, yet they simply don't exist.

During training, AI read massive amounts of book-related content: book reviews, tables of contents, abstracts, paper citations, reading notes, e-book fragments, publication information.

It didn't learn "knowledge"—it learned "structure."

A book typically contains: topic, author background, chapter structure, argument development, case support, conclusion and elevation.

So when you give it a book title, it will automatically complete:

"All the elements a book should have."

CABI (Centre for Agriculture and Bioscience International) research points out that AI will "derive" ghost references from user prompts, tailored exactly to what the user asks for, whether or not they really exist. Ask for something and it "creates" it for you: the right format, plausible content, and no real source behind it.

Because it learned: Chapter titles are usually noun phrases, chapter order usually goes from "concepts → cases → methods → conclusions," each chapter has "logical progression," each chapter title should "look professional, deep, and structured."

So it will generate tables of contents built exactly on these patterns.

These structures are very "real-looking," but could be completely fake.

AI doesn't know whether this book has a Chapter 3; it only knows "humans typically write about Chapter 3 when introducing books"—so it wrote it.

Because it learned: Summaries usually contain "background + problem + method + value," writing style is usually "professional + general + slightly exaggerated," and humans like to use "This book will take you..." when writing summaries.

So it will generate: "This book deeply explores...", "The author analyzes from a unique perspective...", "This book is suitable for readers interested in..."

These sentence patterns appear extremely often in the text it was trained on, so it generates them naturally.

A 2023 analysis by the National Taiwan University Library pointed out that ChatGPT-generated references are "hard to distinguish real from fake": some citations actually exist, others are completely fabricated, yet all are formatted in full conformity with academic standards, so users struggle to tell which is which.

Because it learned: Each chapter needs "one central idea," each chapter needs "one logical chain," each chapter needs "one case or argument."

So it will automatically complete: "This chapter mainly discusses...", "The author believes...", "Through case XX, this chapter illustrates..."

This isn't "understanding"—it's a "narrative template."

AI isn't summarizing book content—it's generating "what humans typically say when summarizing books."

Because it learned: Theories are usually composed of "definition + principle + application," humans like to use abstract nouns to construct theories, and language patterns can make fictional theories look "very professional."

So it can generate (hypothetical example): "Emotional Dynamics Model," "Cognitive Flow Framework," "Structured Behavior Path."

These sound impressive, but might not exist at all.

Rolling Stone warned in a 2025 report: AI is creating a "fake academic ecosystem"—non-existent papers, non-existent books, non-existent research are threatening human knowledge systems themselves. When AI-fabricated content is disseminated as real citations, falsehoods become "consensus."

Because it learned: Authors usually have "education + research direction + institution," the more specific an author's background, the more credible it seems, and humans like to link "professionalism" with "authority."

So it will complete: "Professor at X University," "Research direction is XX," "Previously worked at X institution."

This information might be completely fabricated, but language patterns make it "look reasonable."

A Zhihu user shared their experience of asking ChatGPT to recommend references: the papers it gave had "correct journal names, correct years, correct page numbers, but checking the official site, they don't exist at all." AI isn't "lying"; it's simply generating "what looks most like a reference."

AI's strength lies in this: it can mimic book structures, author tones, and academic styles, construct self-consistent logical chains, and fill in large amounts of detail.

So what you see isn't a "real book," but a:

"Language-pattern-driven fictional book."

But it looks very real.

A GPTZero investigation found that at NeurIPS, a top AI conference, about half of the papers containing "hallucinated citations" were themselves strongly suspected of being AI-generated. AI fabricates citations → paper gets accepted → paper becomes a new "academic source." Falsehoods are self-propagating.

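Now we can see more clearly the fundamental difference between two logics:

Human understanding of books: read the actual book, then describe what it contains.

AI generation of books: reproduce how books are typically described, without ever having seen the book.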

When you mistake "language generation" for "content understanding," you think AI "has read this book." But actually, it's just "fabricating" according to templates, never having seen the real book.

AI isn't "introducing a book"—it's "generating a book's language structure."

It's not telling you the truth—it's telling you:

"Humans would typically write a book this way."

Understanding AI's "book hallucination" is the only way to truly understand its boundaries.

This is Article 7 of the series "The Misalignment of Intelligence: The Underlying Logic of AI Hallucination."

Next: "Human Logic vs. AI Logic: The Fundamental Difference Between Two Types of Intelligence"

—Why are human and AI "intelligence" fundamentally different things?

Understanding underlying logic is the first step to understanding the age of intelligence.